TL;DR: No LLM provider tells you through its API what a model can do, so frameworks build their own registries. LiteLLM maintains a model_cost_map with 2600+ entries, LangChain pulls from a third-party database (models.dev), and smaller projects just hardcode lists. None of this comes from the provider. A single capabilities field on /v1/models would fix this at the source.

The problem

I was building AgentU and hit this: before sending an image to a model, I need to know if it supports vision. Before using response_format: { type: "json_object" }, I need to know it supports structured output. Before calling tools, I need to know it supports function calling.

How do you find out? You don’t. Not from the API anyway.

You check the docs. Or you hardcode a list. Or you try and catch the error. That’s the state of things in 2025.

What LiteLLM had to build

LiteLLM supports 140+ providers and 2600+ models. To know what each model can do, they maintain a community-updated model_cost_map, a JSON registry mapping model names to capabilities, pricing, and limits:

import litellm

# These work because LiteLLM maintains a client-side registry, not because providers expose it
litellm.supports_vision(model="gpt-4o")  # True
litellm.supports_vision(model="gpt-4-turbo")  # True
litellm.supports_vision(model="claude-3-opus")  # True
litellm.supports_function_calling(model="gpt-4o")  # True

Every time a provider ships a new model or adds a capability to an existing one, someone has to open a PR to update this list. LangChain took a different approach with “Model Profiles” backed by models.dev, but the data still lives client-side. It’s pulled from a third-party database, not from the providers themselves. Either way, the provider knows the answer and won’t tell you programmatically.
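
To make the maintenance burden concrete, here is a minimal sketch of what a client-side registry boils down to. The `REGISTRY` data and the `supports` helper are illustrative names of my own, not LiteLLM's or LangChain's actual data or API.

```python
from typing import Dict, Optional

# Hand-maintained data: someone has to edit this whenever a provider ships a model.
# The entry below is illustrative only.
REGISTRY: Dict[str, Dict[str, bool]] = {
    "gpt-4o": {"vision": True, "function_calling": True},
}


def supports(model: str, capability: str) -> Optional[bool]:
    """Return True/False for a known model, or None if the registry has never heard of it."""
    entry = REGISTRY.get(model)
    if entry is None:
        return None  # unknown model: the registry is stale or incomplete
    return entry.get(capability, False)
```

The `None` branch is the interesting one: a model released yesterday is indistinguishable from a model that does not exist until someone updates the list.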

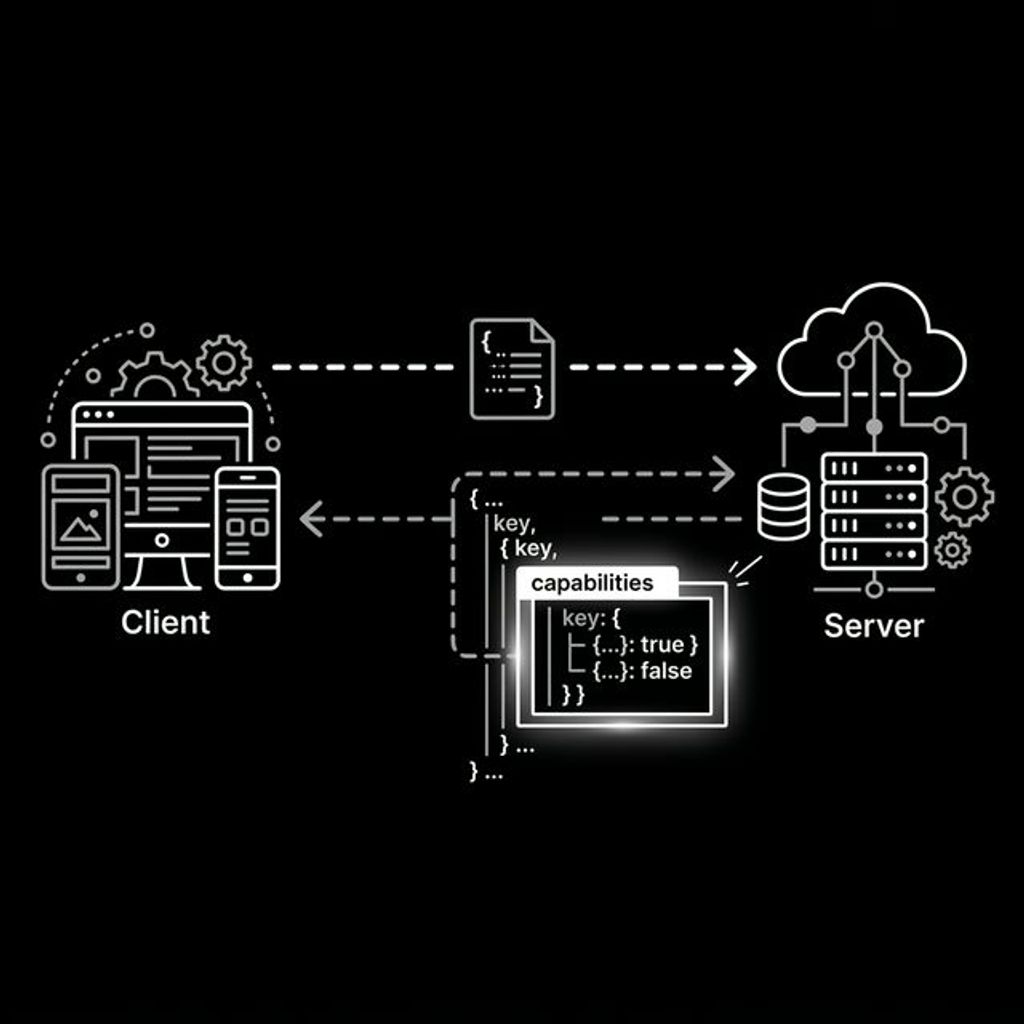

What the API response looks like today

Hit OpenAI’s /v1/models endpoint right now:

{
  "id": "gpt-4o",
  "object": "model",
  "created": 1715367049,
  "owned_by": "system"
}

That’s it. An ID, a timestamp, and an owner. No capabilities. No context window. Nothing you actually need to decide how to use the model.

What it should look like

{
  "id": "gpt-4o",
  "object": "model",
  "created": 1715367049,
  "owned_by": "system",
  "capabilities": {
    "text": true,
    "vision": true,
    "audio": true,
    "function_calling": true,
    "structured_output": true,
    "streaming": true,
    "parallel_tool_calls": true,
    "json_mode": true
  },
  "context_window": 128000,
  "max_output_tokens": 16384
}

Three extra top-level fields. Fully backward-compatible. Every client that doesn’t check capabilities keeps working. Every client that does gets to drop their hardcoded registries.
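
The backward-compatibility claim is easy to check from the client side. The sketch below parses one /v1/models entry and treats the proposed fields as optional, so the same code handles today's response and the proposed one. `ModelInfo` and `parse_model` are names I made up for illustration.

```python
import json
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class ModelInfo:
    id: str
    # Proposed fields: absent from today's responses, so each one has a default.
    capabilities: Dict[str, bool] = field(default_factory=dict)
    context_window: Optional[int] = None
    max_output_tokens: Optional[int] = None


def parse_model(raw: str) -> ModelInfo:
    """Parse one /v1/models entry, tolerating responses without the proposed fields."""
    data = json.loads(raw)
    return ModelInfo(
        id=data["id"],
        capabilities=data.get("capabilities", {}),
        context_window=data.get("context_window"),
        max_output_tokens=data.get("max_output_tokens"),
    )


# Today's shape and a trimmed version of the proposed shape.
today = parse_model('{"id": "gpt-4o", "object": "model", "created": 1715367049, "owned_by": "system"}')
proposed = parse_model(
    '{"id": "gpt-4o", "object": "model", "capabilities": {"vision": true}, "context_window": 128000}'
)
```

A client that never reads `capabilities` is unaffected either way, and a client that does read it gets an empty dict from providers that have not adopted the field yet.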

Why this matters for agents

Agents make model selection decisions at runtime. An orchestrator might choose gpt-4o-mini for a text task and gpt-4o for an image task. Right now, that routing logic is based entirely on hardcoded assumptions:

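# This is how every framework does it today
VISION_MODELS = [
    "gpt-5.4",
    "claude-opus-4.6",
    "gemini-3.1-pro",
    "llama-4-maverick",
]

def select_model(task):
    # available_models and default_model come from the application's own config
    if task.has_images:
        # None if no available model is on the hardcoded list
        return next((m for m in available_models if m in VISION_MODELS), None)
    return default_model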

With capability discovery, it becomes:

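# How it should work ("capabilities" is the proposed field, not part of today's API)
def select_model(task):
    models = list(client.models.list())
    if task.has_images:
        # None if no listed model reports vision support
        return next((m for m in models if m.capabilities.get("vision")), None)
    return models[0]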

No hardcoded list. No stale config. The model tells you what it can do.

What about non-OpenAI providers?

Anthropic, Google, Mistral: none of them expose this either. The /v1/models format just happens to be the one everyone copies. Ollama, Groq, Together, LM Studio, vLLM all implement /v1/models or something close to it. If OpenAI adds capabilities, the rest of the ecosystem would likely follow within months.

For providers with their own API formats, a /.well-known/model-capabilities.json could work. But that’s the harder problem. Solve the easy one first.
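
A rough sketch of what a client for that well-known file might look like. The path comes from the idea above, but the document shape (a top-level `models` list with `id` and `capabilities` per entry) is purely my assumption, since no such format exists today.

```python
import json
from typing import Dict
from urllib.parse import urljoin

WELL_KNOWN_PATH = "/.well-known/model-capabilities.json"


def capabilities_url(base_url: str) -> str:
    """Build the discovery URL at the root of a provider's API origin."""
    return urljoin(base_url, WELL_KNOWN_PATH)


def parse_capabilities_document(raw: str) -> Dict[str, Dict[str, bool]]:
    """Map model id -> capability flags from a hypothetical discovery document."""
    document = json.loads(raw)
    return {entry["id"]: entry.get("capabilities", {}) for entry in document["models"]}


# Hypothetical document body; a real client would fetch it from capabilities_url(...).
sample = '{"models": [{"id": "example-model", "capabilities": {"vision": true}}]}'
```

Because the path is absolute, `capabilities_url("https://api.example.com/v1/")` resolves to the origin root rather than under `/v1/`, which is the usual convention for well-known locations.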

Why not ask the model itself?

A natural counter-proposal: skip the API, just ask the model. “Do you support vision?” But this is a generation task, not introspection. The model will confidently say “yes” whether it can process images or not. That’s not self-awareness — it’s next-token prediction. Self-reporting is hallucination with extra steps.

Three approaches could work, but they all require the provider to be the source of truth:

System-injected context. The provider injects a read-only capability block into every conversation before your messages arrive. The model reads its own spec sheet rather than guessing. This is how models already know their knowledge cutoff date — capabilities are the logical next field.

[SYSTEM-INJECTED, READ-ONLY]
model: gpt-4o
capabilities: text, vision, audio, function_calling, structured_output
context_window: 128000
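
On the provider side, generating a block like the one above would be a small formatting step over data the provider already holds. This is a sketch under that assumption; `render_capability_block` and the example model are hypothetical.

```python
from typing import Dict


def render_capability_block(model: str, capabilities: Dict[str, bool], context_window: int) -> str:
    """Render provider-side metadata as a read-only text block for injection."""
    # Only capabilities that are switched on are listed, in dict insertion order.
    enabled = ", ".join(name for name, on in capabilities.items() if on)
    return "\n".join(
        [
            "[SYSTEM-INJECTED, READ-ONLY]",
            f"model: {model}",
            f"capabilities: {enabled}",
            f"context_window: {context_window}",
        ]
    )


block = render_capability_block(
    "example-model",
    {"text": True, "vision": True, "audio": False},
    128000,
)
```

The point of the design is that the text is built from the provider's records, so the model reads facts instead of generating a guess.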

Built-in capability tool. Give models a get_capabilities() tool that returns provider ground truth. The model calls the tool, gets facts back. No generation, no hallucination. The response comes from the API layer, not the weights.
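
A minimal sketch of how such a tool could be wired, assuming the OpenAI-style function-calling schema for the tool definition. The tool does not exist today, and `PROVIDER_FACTS` and `handle_tool_call` are hypothetical stand-ins for logic that would live in the provider's API layer.

```python
import json
from typing import Dict

# Tool definition in the OpenAI-style function-calling format; the tool itself is hypothetical.
GET_CAPABILITIES_TOOL = {
    "type": "function",
    "function": {
        "name": "get_capabilities",
        "description": "Return the provider's ground-truth capabilities for the current model.",
        "parameters": {"type": "object", "properties": {}},
    },
}

# Stand-in for data held on the provider's side, not in the model weights.
PROVIDER_FACTS: Dict[str, Dict[str, bool]] = {
    "example-model": {"vision": True, "function_calling": True},
}


def handle_tool_call(model: str, tool_name: str) -> str:
    """Answer a get_capabilities call from provider data, returned as a JSON string."""
    if tool_name != "get_capabilities":
        raise ValueError(f"unknown tool: {tool_name}")
    return json.dumps(PROVIDER_FACTS.get(model, {}), sort_keys=True)
```

The model only decides to call the tool. The answer is a lookup, so nothing in the result depends on what the model believes about itself.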

Response metadata. Return capabilities as a sideband field in the response body, never touching the generation pipeline at all:

{
  "choices": [...],
  "model_capabilities": {"vision": true, "function_calling": true}
}

All three reinforce the same point: the model can’t tell you what it can do. The provider can. Put it in the API.

The ask

This isn’t a complex spec proposal. It’s one backward-compatible field on an endpoint that already exists.

Providers know their model capabilities. They just don’t expose them. Every framework in the ecosystem is working around this by building client-side registries that go stale.

The fix is small. The impact is large. Ship capabilities on /v1/models.

P.S. - If you’re maintaining one of these model registries, you know the pain. If you’re a provider, you know the answer. Put it in the API.

P.P.S. - I’ve filed a proposal on the OpenAI OpenAPI spec to add a capabilities field to /v1/models. If this resonates, go give it a 👍.

About Hemanth HM

Hemanth HM is a Sr. Machine Learning Manager at PayPal, Google Developer Expert, TC39 delegate, FOSS advocate, and community leader with a passion for programming, AI, and open-source contributions.