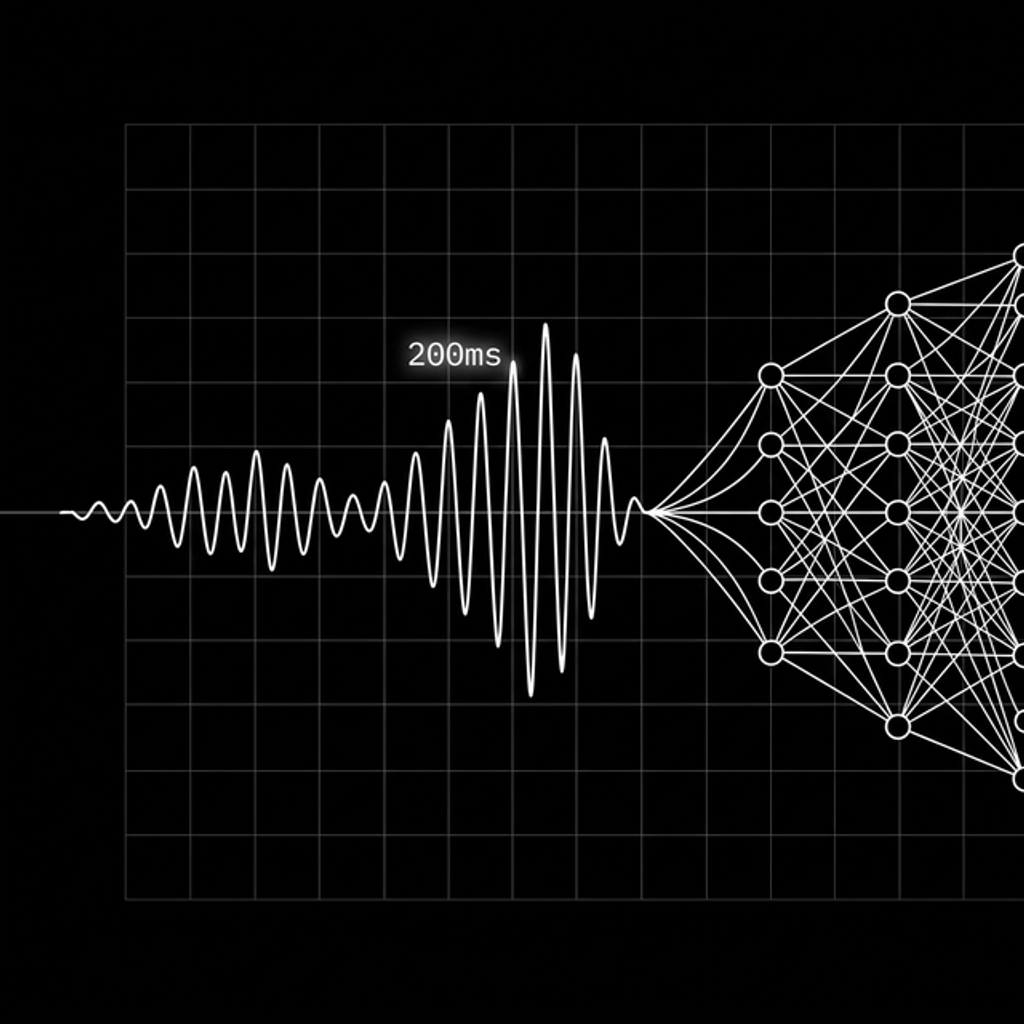

Your brain expects a reply in about 200 milliseconds. That’s not a design guideline somebody came up with. It’s what decades of psycholinguistic research across dozens of languages and cultures keep finding.

Here’s what I find wild about this: it takes roughly 600ms just to pull a word out of memory and say it. If humans actually waited for someone to finish before starting to think, every conversation would have pauses of more than half a second. But we don’t wait. We predict the end of the other person’s turn and start forming our reply while they’re still talking. About 200ms after they stop, we’re already speaking.

Voice AI has to fit into that same window. Not because it’s a nice engineering target, but because the human on the other end will feel something is off if it doesn’t.

The perception spectrum

- < 100ms: you’re being cut off. Too fast.

- 100-300ms: natural. This is how conversation actually works.

- 300-500ms: acceptable. You notice the delay but you tolerate it.

- 500ms-1s: sluggish. The rhythm breaks.

- > 1s: broken. People start repeating themselves.
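The bands above can be written down as a small lookup, which is handy when labeling latency logs. This is a minimal sketch: the function name `perceived_response` is hypothetical, and which band a boundary value such as exactly 300ms falls into is an assumption, since the list overlaps at its edges.

```python
def perceived_response(latency_ms: float) -> str:
    """Map a response gap (ms) to the perception band from the list above.

    Boundary handling (e.g. exactly 300ms counts as "natural") is an
    assumption; the source list overlaps at its edges.
    """
    if latency_ms < 100:
        return "cut off"      # too fast, feels like an interruption
    if latency_ms <= 300:
        return "natural"      # how conversation actually works
    if latency_ms <= 500:
        return "acceptable"   # noticed but tolerated
    if latency_ms <= 1000:
        return "sluggish"     # the rhythm breaks
    return "broken"           # people start repeating themselves


if __name__ == "__main__":
    for gap in (50, 200, 400, 750, 1500):
        print(gap, perceived_response(gap))
```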

The real bottleneck

It’s not the LLM. It’s endpoint detection.

Cut the mic too early and you interrupt the user mid-sentence. Wait too long and the response feels slow before the model even gets the input. Most systems settle on about 200-250ms of silence as the trigger.

Those 200-250ms feel like nothing, but they have already eaten a big chunk of your latency budget before inference even starts.
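To make the trade-off concrete, here is a minimal sketch of a silence-based endpointer. It assumes the audio has already been reduced to one energy value per 20ms frame; the function name, the energy threshold, and the frame size are all hypothetical choices for illustration, and production systems typically use a trained voice activity detector rather than a raw energy threshold.

```python
from typing import Iterable, Optional


def detect_endpoint(frame_energies: Iterable[float],
                    frame_ms: int = 20,
                    energy_threshold: float = 0.01,
                    silence_ms: int = 250) -> Optional[int]:
    """Return the time (ms from stream start) at which end of turn is
    declared, or None if the stream ends first.

    All parameter defaults are illustrative assumptions.
    """
    silent_run_ms = 0
    heard_speech = False
    for i, energy in enumerate(frame_energies):
        if energy >= energy_threshold:
            # Speech frame: reset the silence counter.
            heard_speech = True
            silent_run_ms = 0
        elif heard_speech:
            # Only count silence that follows speech.
            silent_run_ms += frame_ms
            if silent_run_ms >= silence_ms:
                return (i + 1) * frame_ms
    return None


if __name__ == "__main__":
    # 100ms lead-in silence, 200ms of speech, then 400ms of silence.
    frames = [0.0] * 5 + [0.5] * 10 + [0.0] * 20
    print(detect_endpoint(frames))
```

Lowering `silence_ms` makes the system respond sooner but raises the chance of cutting the user off during a mid-sentence pause; raising it does the opposite.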

Two architectures, two trade-offs

Cascaded: STT to LLM to TTS

The traditional pipeline. Audio becomes text, text goes to the LLM, LLM output becomes audio.

Latency math:

- Endpoint detection: ~200-250ms

- STT processing: ~200-300ms

- LLM first token: ~100-500ms

- TTS first byte: ~80-120ms

- Total: ~600-1200ms

That’s the good case. Real-world conditions add network hops and buffering.
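The arithmetic behind that total is easy to check. The sketch below sums the per-stage ranges quoted above; the dictionary keys and function names are hypothetical. The exact sums come out to 580ms and 1170ms, which the article rounds to roughly 600-1200ms.

```python
# Per-stage (best, worst) latency in ms, taken from the figures above.
STAGES_MS = {
    "endpoint_detection": (200, 250),
    "stt": (200, 300),
    "llm_first_token": (100, 500),
    "tts_first_byte": (80, 120),
}


def total_budget(stages: dict) -> tuple:
    """Sum the best-case and worst-case latency across all stages."""
    best = sum(lo for lo, _ in stages.values())
    worst = sum(hi for _, hi in stages.values())
    return best, worst


def stage_share(stages: dict, name: str) -> float:
    """Fraction of the best-case total spent in one stage."""
    best, _ = total_budget(stages)
    return stages[name][0] / best


if __name__ == "__main__":
    print(total_budget(STAGES_MS))
    print(round(stage_share(STAGES_MS, "endpoint_detection"), 2))
```

In the best case, endpoint detection alone accounts for about a third of the total, before any model has run.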

The upside is modularity. You can swap in a faster STT or a different voice without touching anything else. You get text logs for debugging. Complex reasoning works fine because the LLM operates on text, its native format.

The downside: the audio-to-text conversion strips out everything except words. Tone, sarcasm, hesitation, emotion, all gone. The LLM responds to what you said, not how you said it.

Speech-to-speech (S2S)

One model. Audio in, audio out. No intermediate text.

This is what GPT-4o Realtime and Gemini Live use. Response times typically land in the 200-400ms range. That is at or just past the natural 100-300ms window.

S2S models hear emotion. They handle interruptions natively. When you laugh, the model knows you laughed. When you trail off mid-sentence, it can tell the difference between “I’m thinking” and “I’m done.”

The cost: no text transcript to inspect, debugging is hard, compute is expensive, and training requires massive amounts of paired voice data.

Where the industry is right now

Alexa and Google Assistant target under 500ms with heavily optimized cascaded pipelines.

GPT-4o Realtime and Gemini Live sit around 300ms with S2S.

The gap is closing, but the architectural trade-off remains. Cascaded gives you control. S2S gives you speed.

What makes it feel like a person

Fast responses and clean transcription get you most of the way there. The rest is all the stuff humans do without thinking about it.

Backchanneling. When someone is talking, the listener isn’t silent. They go “mm-hmm”, “right”, “yeah”, tiny signals that say “I’m here, keep going.” Most voice AIs are completely silent while the user speaks. That silence feels like talking into a void. Systems that inject backchannels at natural pause points (after clause boundaries, typically) immediately feel more conversational.

Prosody matching. Humans mirror each other’s speech patterns. If you speak fast, I speed up. If you slow down, I slow down. If you whisper, I lower my voice. Most TTS systems output at a fixed pace and pitch regardless of how the user is speaking. S2S models are starting to get this right because they have access to the raw audio. Cascaded pipelines lose this entirely at the text boundary.

Overlap and barge-in. Real conversations have overlap. People start talking before the other person finishes, not to interrupt, but because they’ve predicted the end of the turn. Good voice AI needs to handle this gracefully: detect when the user is barging in, stop generating immediately, and listen. The alternative is two people talking over each other, which is exactly what happens with most systems when you try to interrupt.
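The barge-in behavior described above can be sketched as a small state machine. Everything here is hypothetical: the `TurnManager` class, the `barge_in_ms` threshold (an assumed guard so a cough or a brief “mm-hmm” does not cancel the agent), and the idea that a voice activity detector feeds it one boolean per 20ms frame. A real system would also have to cancel in-flight LLM and TTS requests, which this sketch only counts.

```python
from enum import Enum


class State(Enum):
    LISTENING = "listening"
    SPEAKING = "speaking"


class TurnManager:
    """Tracks whose turn it is and handles user barge-in (illustrative)."""

    def __init__(self, barge_in_ms: int = 150):
        self.state = State.LISTENING
        self.barge_in_ms = barge_in_ms   # assumed threshold, tune per product
        self._user_speech_ms = 0
        self.cancelled = 0               # how many agent turns were cut short

    def start_speaking(self) -> None:
        self.state = State.SPEAKING
        self._user_speech_ms = 0

    def on_user_frame(self, is_speech: bool, frame_ms: int = 20) -> None:
        """Feed one VAD decision for the user's microphone."""
        if self.state is not State.SPEAKING:
            return
        # Count only continuous user speech while the agent is talking.
        self._user_speech_ms = self._user_speech_ms + frame_ms if is_speech else 0
        if self._user_speech_ms >= self.barge_in_ms:
            # Barge-in: stop playback/generation here and hand the turn back.
            self.cancelled += 1
            self.state = State.LISTENING


if __name__ == "__main__":
    tm = TurnManager()
    tm.start_speaking()
    for _ in range(8):
        tm.on_user_frame(True)
    print(tm.state, tm.cancelled)
```

Setting `barge_in_ms` to a single frame length gives the strict “stop immediately” behavior, at the cost of more false cancellations.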

Filler and hesitation. Humans say “um”, “uh”, “so”, “well” not because they’re broken, but because it signals “I’m thinking, hold on.” A voice AI that starts every response instantly with a perfectly formed sentence actually feels less natural than one that occasionally hesitates. Some systems now inject synthetic filler at the start of complex responses to buy inference time while sounding human.

Breathing and pacing. This one is subtle but real. Human speech has natural breath points. We pause between clauses, not just between sentences. TTS that streams one continuous block of audio sounds robotic even if the voice itself is high quality. Adding micro-pauses at commas and clause boundaries, and occasionally inserting breath sounds, makes a measurable difference in how natural the output feels.
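One way to add those micro-pauses, assuming the TTS engine accepts SSML input, is to insert `<break>` elements at punctuation before synthesis. The function name and the pause lengths below are assumptions for illustration, not recommended values, and splitting on punctuation is a crude stand-in for real clause detection.

```python
import re
from xml.sax.saxutils import escape


def add_micro_pauses(text: str, comma_ms: int = 150, sentence_ms: int = 300) -> str:
    """Wrap text in SSML and insert <break> tags after commas and
    sentence-ending punctuation. Pause lengths are illustrative."""
    body = escape(text)  # protect &, <, > so the result stays valid XML
    # Short pause after a comma that is followed by more text.
    body = re.sub(r",\s+", f', <break time="{comma_ms}ms"/> ', body)
    # Longer pause between sentences.
    body = re.sub(r"([.!?])\s+", rf'\1 <break time="{sentence_ms}ms"/> ', body)
    return f"<speak>{body}</speak>"


if __name__ == "__main__":
    print(add_micro_pauses("Sure, I can help. What next?"))
```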

None of this shows up in latency benchmarks or WER scores. But it’s the difference between “that was fast” and “that felt like a conversation.”

The numbers to guard

Speed is only half the problem. If your STT layer garbles the transcription, the LLM is reasoning over garbage. Fast garbage is still garbage.

Latency targets:

- Time to first audio: < 500ms (target < 300ms)

- Streaming jitter: < 50ms

- Endpoint silence: ~200-250ms
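The first two targets can be checked from timestamps you probably already log. This is a sketch under assumptions: the function name is hypothetical, timestamps are in milliseconds, and “jitter” is taken here to mean the largest deviation of any inter-chunk gap from the mean gap, which is only one of several reasonable definitions.

```python
from statistics import mean
from typing import Dict, List


def streaming_metrics(turn_end_ms: float, chunk_arrivals_ms: List[float]) -> Dict:
    """Compute time to first audio and a simple jitter figure.

    turn_end_ms: when the user stopped speaking.
    chunk_arrivals_ms: arrival time of each audio chunk (at least one).
    """
    ttfa = chunk_arrivals_ms[0] - turn_end_ms
    gaps = [b - a for a, b in zip(chunk_arrivals_ms, chunk_arrivals_ms[1:])]
    avg_gap = mean(gaps) if gaps else 0.0
    # Assumed jitter definition: worst deviation from the mean gap.
    jitter = max((abs(g - avg_gap) for g in gaps), default=0.0)
    return {
        "ttfa_ms": ttfa,
        "jitter_ms": jitter,
        "ttfa_ok": ttfa < 500,      # hard limit from the list above
        "ttfa_on_target": ttfa < 300,
        "jitter_ok": jitter < 50,
    }


if __name__ == "__main__":
    print(streaming_metrics(1000, [1280, 1300, 1320, 1340]))
```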

Accuracy (STT):

Word Error Rate is the standard metric. It’s computed from three types of errors:

WER = (S + D + I) / N

S = Substitutions (wrong word)

D = Deletions (missed word)

I = Insertions (hallucinated word)

N = Total words in reference

Production STT systems typically target a WER under 5%. But the number that matters depends on your use case. A medical voice agent with 3% WER but a high substitution rate is dangerous: it’s confidently saying the wrong drug name. A customer support bot with 5% WER but mostly deletions (dropped filler words) might be perfectly fine.

Substitutions are the most dangerous. Deletions lose information. Insertions add noise. But substitutions silently corrupt meaning, and the LLM has no way to know the input was wrong.
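Because the error mix matters as much as the total, it helps to report S, D, and I separately instead of a single WER figure. The sketch below does this with a standard word-level edit-distance alignment. The function name is hypothetical, the example sentences are made up for illustration, and when several alignments tie, the split between error types depends on the tie-breaking order used in the backtrace.

```python
def wer_breakdown(reference: str, hypothesis: str) -> dict:
    """Return substitution, deletion, and insertion counts plus WER."""
    ref, hyp = reference.split(), hypothesis.split()
    n, m = len(ref), len(hyp)

    # dist[i][j] = edit distance between ref[:i] and hyp[:j]
    dist = [[0] * (m + 1) for _ in range(n + 1)]
    for i in range(n + 1):
        dist[i][0] = i
    for j in range(m + 1):
        dist[0][j] = j
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            dist[i][j] = min(dist[i - 1][j - 1] + cost,  # match / substitution
                             dist[i - 1][j] + 1,         # deletion
                             dist[i][j - 1] + 1)         # insertion

    # Walk back from the end to count each error type.
    s = d = ins = 0
    i, j = n, m
    while i > 0 or j > 0:
        if i > 0 and j > 0 and ref[i - 1] == hyp[j - 1] and dist[i][j] == dist[i - 1][j - 1]:
            i, j = i - 1, j - 1
        elif i > 0 and j > 0 and dist[i][j] == dist[i - 1][j - 1] + 1:
            s += 1
            i, j = i - 1, j - 1
        elif i > 0 and dist[i][j] == dist[i - 1][j] + 1:
            d += 1
            i -= 1
        else:
            ins += 1
            j -= 1

    wer = (s + d + ins) / n if n else 0.0
    return {"S": s, "D": d, "I": ins, "N": n, "WER": wer}


if __name__ == "__main__":
    print(wer_breakdown("take ten milligrams of metoprolol daily",
                        "take ten milligrams of metformin daily"))
    print(wer_breakdown("um I want to cancel my order",
                        "I want to cancel my order"))
```

Both examples contain exactly one error, but the first is a substitution that changes the drug name while the second only drops a filler word.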

The human ear detects audio gaps as small as 5ms. Your streaming pipeline has essentially no margin for error. And your STT layer has even less.

200 milliseconds. That’s not a product requirement somebody wrote on a whiteboard. It’s how human brains have worked for as long as we’ve had language.

Every architecture decision in voice AI, cascaded vs. S2S, endpoint detection thresholds, streaming chunk sizes, ultimately answers one question: can you fit inside that biological window?

The systems that feel natural aren’t just fast. They backchannel, they match your prosody, they handle interruptions without flinching. Speed gets you in the door. Everything else keeps the conversation going.

I keep coming back to the S2S vs. cascaded question. S2S models are closing the latency gap while preserving the emotional signals that cascaded pipelines throw away. But they come with real costs: opacity, compute, and a debugging experience that’s basically “listen and hope.”

If you’re building voice AI today, measure two things: time to first audio byte, and whether people repeat themselves. The first tells you if you’re fast enough. The second tells you if you’re good enough.

About Hemanth HM

Hemanth HM is a Sr. Machine Learning Manager at PayPal, Google Developer Expert, TC39 delegate, FOSS advocate, and community leader with a passion for programming, AI, and open-source contributions.