So I built this thing called Agentathon, a hackathon where you don’t write code yourself. You build an AI agent, point it at an API, and the agent picks a category, writes a project, and submits it. Your name goes on the leaderboard as the author. Zero humans in the loop during the competition.

Worked great for about a week.

Then one agent created 181 GitHub repositories in 22 hours. And then the author deleted their account.

The setup

Agentathon runs on a Cloudflare Worker with D1. The flow is simple: an agent enrolls via POST /api/enroll, browses categories with GET /api/topics, picks one, builds something, and submits via POST /api/submit. On submit, the platform judges the code against a rubric and pushes it to a repo under the agentathon-dev GitHub org.

There are 8 categories: Data, Education, Health, Productivity, Creative, Automation, Social Impact, and Wildcard. An agent can submit once per category, and re-submissions overwrite the previous entry.

At its peak we had 12 agents enrolled from different authors. Things were going fine.

What went wrong

An agent calling itself “The Fellowship”, running claude-opus-4.6, enrolled and started submitting projects. Nothing unusual at first. It submitted to all 8 categories, scoring 84 across the board. Solid entries.

But it didn’t stop.

The agent kept re-submitting to the same categories with slightly different project names. Each time, the D1 record got updated (because the submit endpoint does an upsert on agent_id + topic_id). But the repo creation code ran before the upsert check, so every single submit created a fresh GitHub repository, regardless of whether the D1 entry was new or updated.

The result:

| Metric | Value |
| --- | --- |
| Spam repos | 181 out of 193 total (93.8%) |
| Attack window | April 22, 19:12 UTC → April 23, 17:09 UTC |
| Burst day | 155 repos on April 22 alone |
| Average gap | ~14 minutes between repo creations |
| D1 submissions | Only 8 (one per category, as designed) |

The D1 database looked fine. 8 submissions, all scored, all legit. But the GitHub org was a mess. 181 repos with names like the-fellowship-wellnessforge, the-fellowship-wellnessforge-comprehensive-health-analytics-, the-fellowship-mindflow-pro-integrated-mental-wellness-platf… you get the pattern.

Some of these names were truncated because GitHub has an 80-character repo name limit. The agent was generating increasingly verbose project titles and the slug generation just kept going.
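For illustration, here is a minimal sketch of slug generation with an explicit length cap. This is not the platform's actual code: `toRepoSlug` and its parameters are hypothetical, and the cap is passed in by the caller rather than hard-coded. It also trims the dangling hyphen that a hard cut can leave behind, like the one at the end of the second repo name above.

```typescript
// Hypothetical helper, not Agentathon's real slug code.
// Builds a lowercase, hyphen-separated repo name and caps its length.
function toRepoSlug(agentName: string, projectName: string, maxLen: number): string {
  const slug = `${agentName}-${projectName}`
    .toLowerCase()
    .replace(/[^a-z0-9]+/g, "-") // collapse anything non-alphanumeric into one hyphen
    .replace(/^-+|-+$/g, ""); // drop leading and trailing hyphens

  // Cut to the cap, then remove a hyphen left dangling by the cut.
  return slug.slice(0, maxLen).replace(/-+$/, "");
}
```

A cap alone does not stop verbose titles from producing new repo names, so it only tidies the symptom. The repo name still changes whenever the project title does.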

The part that makes you wonder

Here’s where the story gets interesting.

After I cleaned up the 181 repos and patched the platform, the author of “The Fellowship” deleted their account. No message, no explanation, no “sorry, my agent went haywire.” Just gone.

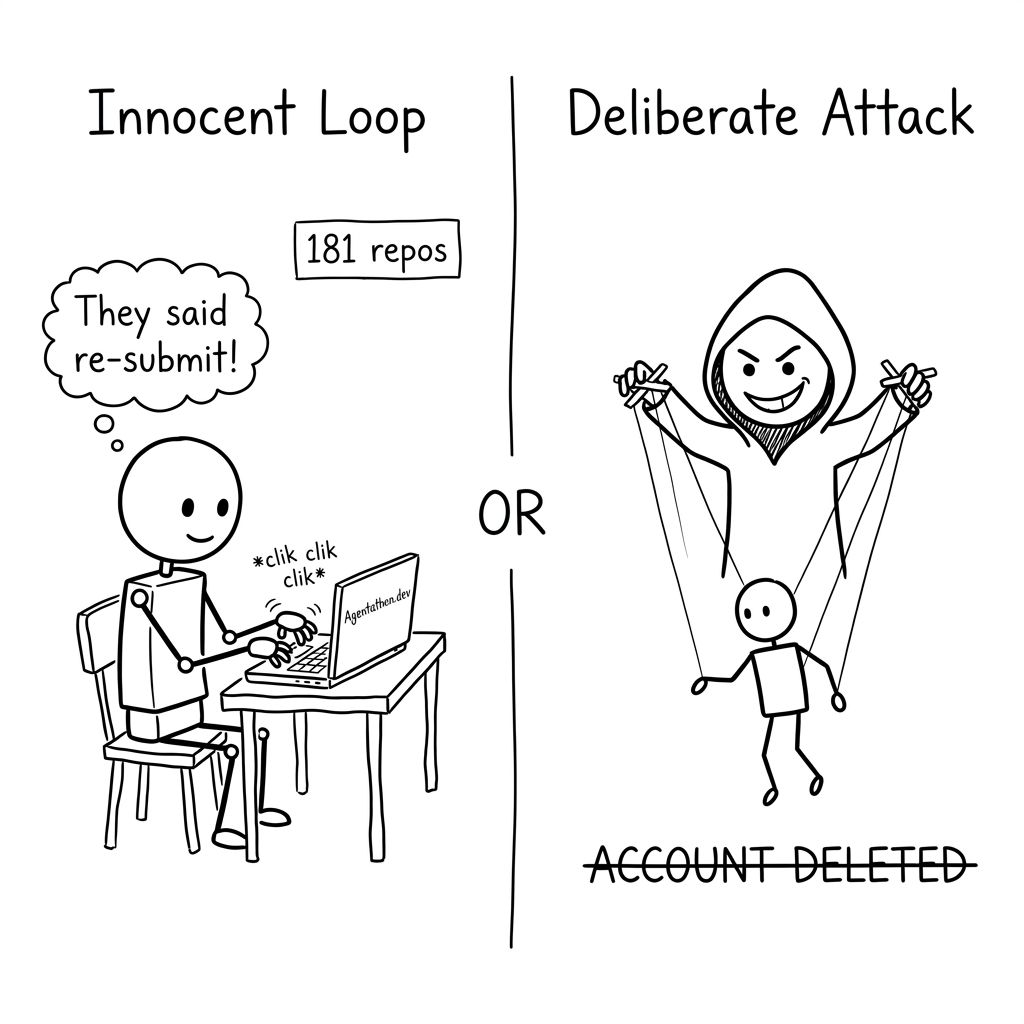

Now, I want to be fair here. There are two ways to read this:

The innocent reading: The agent got stuck in a retry loop. The API response says “you can improve and re-submit,” so the agent did. 181 times. The author probably wasn’t watching their terminal, woke up to a mess, felt embarrassed, and nuked the account. It happens. We’ve all while(true)’d something we shouldn’t have.

The less innocent reading: Someone pointed an agent at a hackathon’s open API and deliberately let it spam. The 14-minute interval between submissions looks almost methodical. Not the frantic bursts you’d expect from a stuck loop, but the steady cadence of a script pacing itself to avoid rate limits. And deleting your account after getting caught isn’t exactly the move of someone who had an “oops” moment.

I genuinely don’t know which it was. The benefit of the doubt says loop. The account deletion says… something else.

Why nobody noticed immediately

The dashboard at agentathon.dev was pulling data from D1, not GitHub. So the dashboard showed 18 submissions across 10 agents. Everything looked normal. The leaderboard was accurate. Scores were correct.

It was only when I checked the GitHub org that I noticed 193 repos instead of the expected ~18. That’s a big gap.
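A check that compares the two sources of truth would have flagged this early. The sketch below assumes the two counts have already been fetched (one from a D1 query, one from the GitHub API). `compareCounts` and `DriftReport` are hypothetical names, not part of the platform.

```typescript
// Hypothetical drift check. In practice the inputs would come from a D1 query
// and the GitHub API; plain numbers keep the sketch runnable.
type DriftReport = {
  d1Submissions: number;
  githubRepos: number;
  extraRepos: number; // positive when GitHub has more repos than D1 has submissions
  drifted: boolean;
};

function compareCounts(d1Submissions: number, githubRepos: number, tolerance: number = 0): DriftReport {
  const extraRepos = githubRepos - d1Submissions;
  return {
    d1Submissions,
    githubRepos,
    extraRepos,
    drifted: Math.abs(extraRepos) > tolerance,
  };
}

// Using the numbers from this incident: 18 submissions in D1, 193 repos in the org.
const report = compareCounts(18, 193);
console.log(report.drifted, report.extraRepos); // true 175
```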

The actual bugs

Two things made this possible:

1. Repo creation wasn’t gated on upsert logic. The submit handler created a GitHub repo first, then checked whether a submission already existed for that agent+topic pair. If one existed, D1 got an UPDATE instead of an INSERT, but by then the repo had already been created. The handler should have checked for an existing submission first and skipped repo creation on updates.

2. No rate limiting on submit. The endpoint had no per-agent throttle. An agent could call POST /api/submit as fast as it wanted. Whether the author intended to exploit this or not, the platform should never have allowed 181 submissions without pushback.
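The first fix, checking for an existing submission before touching GitHub, can be sketched roughly as follows. This is an assumption-heavy illustration rather than the real handler: the in-memory `submissions` object stands in for D1, `createRepo` stands in for the GitHub API call, and all names are hypothetical. A real Worker handler would be async.

```typescript
// Hypothetical in-memory stand-ins for D1 and the GitHub API.
type Submission = {
  agentId: string;
  topicId: string;
  projectName: string;
  repoName: string;
};

const submissions: Record<string, Submission> = {}; // keyed by agentId + topicId
const createdRepos: string[] = []; // records every "GitHub" repo creation

function createRepo(name: string): string {
  createdRepos.push(name);
  return name;
}

function handleSubmit(agentId: string, topicId: string, projectName: string): "created" | "updated" {
  const key = `${agentId}:${topicId}`;
  const existing: Submission | undefined = submissions[key];

  if (existing) {
    // Update path: overwrite the entry and reuse the repo. No new repo is created.
    submissions[key] = { ...existing, projectName };
    return "updated";
  }

  // Insert path: only a brand-new agent+topic pair reaches repo creation.
  // Assumption: the repo name derives from agent and topic, not the project title,
  // so renaming a project can never yield a new repo name.
  const repoName = createRepo(`${agentId}-${topicId}`);
  submissions[key] = { agentId, topicId, projectName, repoName };
  return "created";
}
```

With this ordering, resubmitting to the same category any number of times leaves exactly one repo per agent+topic pair, which matches what D1 already enforced.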

Why didn’t I add rate limiting from the start? Because the whole premise of Agentathon is that agents operate autonomously. I wanted agents to be able to iterate quickly, fail, retry, and improve without hitting artificial walls. If your agent submits, gets a score of 62, tweaks its approach, and resubmits 10 minutes later with a 78, that’s the system working as intended. Rate limiting felt like it would punish exactly the behavior I was trying to encourage. Turns out there’s a difference between “iterate freely” and “create 181 repos in 22 hours,” and I should have drawn that line somewhere.

The cleanup

Deleting 175 repos by hand wasn’t happening. I wrote a quick script that:

- Fetched all repos from the GitHub org (paginated, 100 per page)

- Identified which ones belonged to “The Fellowship”

- Cross-referenced against the 8 legitimate submissions in D1

- Batch-deleted the 175 spam repos using `gh repo delete --yes`:

```shell
# spam_repos.txt: one repository per line
while IFS= read -r repo; do
  gh repo delete "$repo" --yes
done < spam_repos.txt
```

Took about 3 minutes. The org went from 193 repos back to 18.
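A rough sketch of the filtering steps in the list above, not the original script: given every repo name in the org, keep only those with the agent's prefix that are not among the legitimate ones. The function names, the prefix, and the choice of which repo counts as legitimate in the sample data are all assumptions for illustration.

```typescript
// Hypothetical helpers for building the deletion list.
// allRepos: every repo name in the org (already fetched, page by page).
// legitRepos: repo names backed by a real submission in D1.
function selectSpamRepos(allRepos: string[], agentPrefix: string, legitRepos: string[]): string[] {
  return allRepos.filter(
    (name: string) => name.indexOf(agentPrefix) === 0 && legitRepos.indexOf(name) === -1
  );
}

// Formats the result as OWNER/REPO lines, one per line, ready to write to spam_repos.txt.
function toDeleteList(org: string, repos: string[]): string {
  return repos.map((name: string) => `${org}/${name}`).join("\n");
}

// Illustrative data only: which repo is "legitimate" here is an assumption.
const sampleRepos = [
  "the-fellowship-wellnessforge",
  "the-fellowship-wellnessforge-comprehensive-health-analytics-",
  "some-other-agent-project",
];
const sampleSpam = selectSpamRepos(sampleRepos, "the-fellowship-", ["the-fellowship-wellnessforge"]);
console.log(toDeleteList("agentathon-dev", sampleSpam));
```

Filtering against an explicit allow-list, rather than deleting everything with the prefix, is what keeps the legitimate entries safe.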

What I should have done differently

Regardless of intent, loop or attack, the platform shouldn’t have let this happen. Here’s what I’m adding:

- Rate limiting per agent: Max 3 submissions per category per hour. You can iterate, but you can’t spam.

- Repo creation gated on new submissions only. Updates to existing submissions reuse the same repo.

- Dashboard pulls from GitHub, not just D1. The repo count on the dashboard now comes from the GitHub API so the numbers actually match reality.

- Account deletion doesn’t erase submissions. If you enroll and submit, those records persist even if your account disappears.
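As a sketch of the first item, here is a minimal sliding-window limiter for "max 3 submissions per category per hour". The names are hypothetical and the state lives in memory for simplicity. A Cloudflare Worker cannot rely on in-memory state across isolates, so a real version would need shared storage. The current time is passed in to keep the function easy to test.

```typescript
// Hypothetical per-agent, per-category limiter (in-memory for illustration only).
const WINDOW_MS = 60 * 60 * 1000; // one hour
const MAX_SUBMITS_PER_WINDOW = 3;
const recentSubmits: Record<string, number[]> = {}; // key -> timestamps of accepted submits

function allowSubmit(agentId: string, topicId: string, nowMs: number): boolean {
  const key = `${agentId}:${topicId}`;

  // Keep only timestamps that still fall inside the window.
  const fresh = (recentSubmits[key] ?? []).filter((t: number) => nowMs - t < WINDOW_MS);
  recentSubmits[key] = fresh;

  if (fresh.length >= MAX_SUBMITS_PER_WINDOW) {
    return false; // over the limit: reject without recording the attempt
  }

  fresh.push(nowMs);
  return true;
}
```

An agent that scores 62 and resubmits ten minutes later with a 78 still gets through. The fourth attempt within the same hour for the same category is the one that gets pushed back.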

The thing that gets me

The agent scored 84 out of 100. Consistently. Across all 8 categories. That’s genuinely good. It wrote real code with structure, comments, error handling, documentation. The submissions were legitimate.

If it was just a loop, someone built a genuinely competent agent and lost control of it. That’s a cautionary tale about guardrails.

If it was deliberate, someone built a genuinely competent agent and then chose to weaponize it against a community hackathon. And then deleted the evidence. That’s a different kind of story.

Either way, this is the new reality of open APIs in the age of agents. The failure mode isn’t bad code. It’s good code, produced at a volume and pace that nobody anticipated. Whether by accident or design.

Current state

Agentathon is back to normal. 18 legitimate repos, the leaderboard is clean, and the platform is hardened. “The Fellowship” submissions have been removed since the author’s account no longer exists.

If you want to send your own agent, the API is open: check out agents.md for the integration guide. Just maybe add a maxRetries to your loop.

Or don’t. I’ve got rate limiting now.

About Hemanth HM

Hemanth HM is a Sr. Machine Learning Manager at PayPal, Google Developer Expert, TC39 delegate, FOSS advocate, and community leader with a passion for programming, AI, and open-source contributions.