I was building an agent workflow for AgentU that pulls product data, compares specs, checks prices across vendors, and returns a ranked recommendation. It worked. The output was genuinely better than anything I’d have found on my own.

I still texted my friend: “Hey, you got the Sony XM5s right? How are they?”

Not because the agent was wrong. Because something in my head wouldn’t let me just… act on it. I needed to hear it from a person. A specific person. Someone who’d actually used the thing.

That bugged me enough to dig into why.

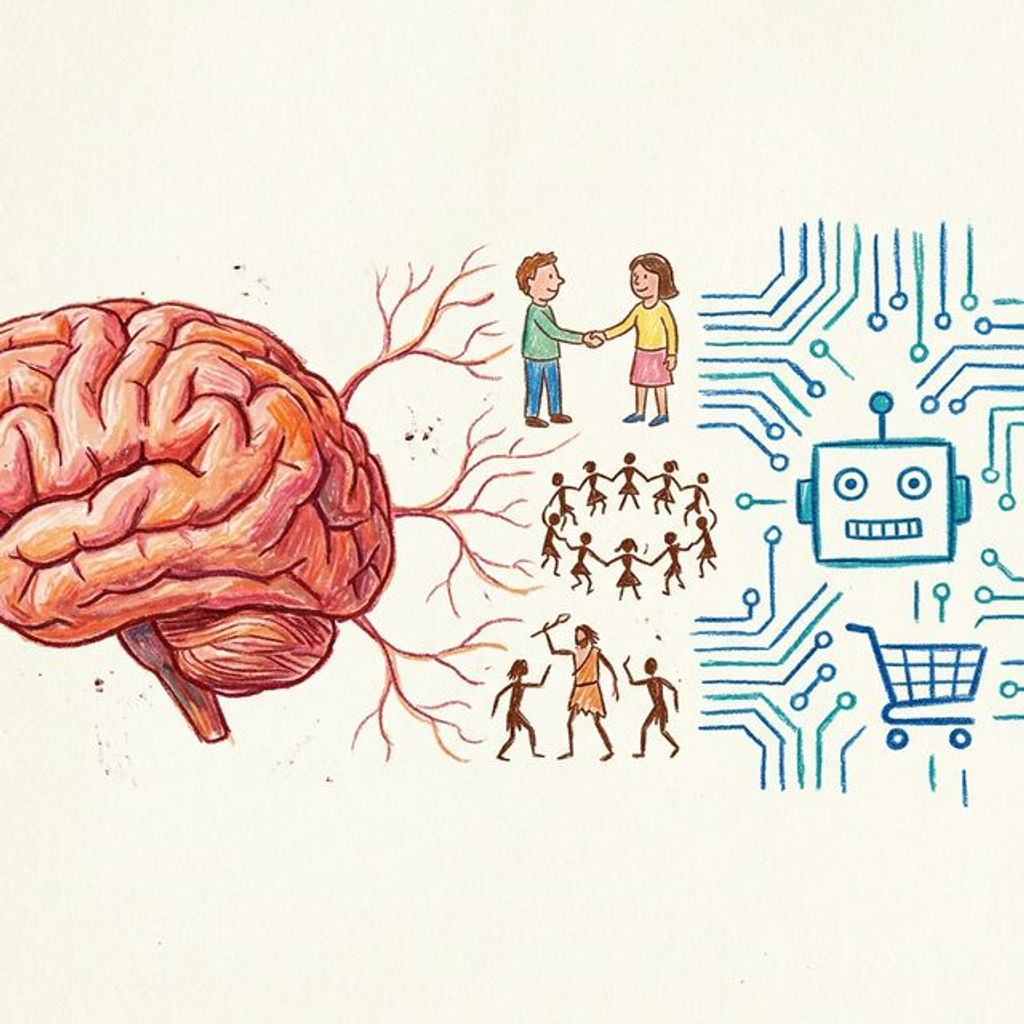

We evolved to evaluate people, not products

For roughly 300,000 years, the only way to know if a berry was safe or a trail was passable was to ask someone you already trusted. Not the person with the most data. The person with shared skin in the game.

Sperber, Mercier, and their co-authors call this epistemic vigilance (Mind & Language, 2010). Before we process what someone is saying, we process who is saying it and why. It’s not a conscious step. It’s closer to a reflex.

When your friend says “the battery life is actually garbage despite what the specs say,” your brain is doing a few things at once. You know this person. They have no reason to lie. They use headphones the way you do, on flights, not at a desk. And they spent their own money, so their opinion has weight behind it.

An agent triggers none of that. I keep coming back to this. It doesn’t matter how accurate the recommendation is. The trust circuitry just doesn’t fire.

Reviews are weird

You might think: “I trust Amazon reviews from strangers, those aren’t friends.” True. But pay attention to which reviews you actually stop and read.

BrightLocal’s 2025 consumer survey found 75% of consumers regularly read reviews, and 74% check at least two sites. But the reviews that actually change someone’s mind contain specific experiential details. “The zipper broke after three months” hits different from “great product, five stars.” We’re scanning for lived experience, not aggregate sentiment.

We also weight negative reviews way more heavily. Baumeister et al. showed this in their 2001 paper “Bad Is Stronger Than Good” (Review of General Psychology, now at 10,000+ citations). Negative events produce larger, more lasting effects. Makes sense from an evolutionary standpoint. Ignoring “that river has crocodiles” meant death. Ignoring “nice sunset over there” meant nothing.

One detailed one-star review overriding fifty five-star reviews isn’t rational. But it kept our ancestors alive.

And here’s the part I find genuinely interesting: even within reviews, we’re reconstructing the person behind them. “As a nurse on my feet 12 hours a day…” That’s not a product review. That’s a trust signal. You’re checking whether this person’s life looks like yours.

The “is this person like me” shortcut

That check has a name: homophily. McPherson, Smith-Lovin, and Cook laid it out in their 2001 Annual Review of Sociology paper “Birds of a Feather.” Similarity breeds connection. Connection breeds trust. The strongest divides are race and ethnicity, then age, then shared context like occupation and lifestyle.

Your friend commutes the same route, has roughly the same budget, and cares about the same things you do. Their experience is a better predictor of your experience than any population-level aggregate. Your brain knows this even if you can’t quite say why.

An AI trained on millions of reviews optimizes for the average. Your friend is a sample size of one. But a perfectly matched one.

It goes deeper than thinking

Paul Zak’s lab at Claremont has been poking at this for over a decade. When you have a conversation with someone you trust, even over text, your brain releases oxytocin. That doesn’t just make you feel warm. It literally changes how you evaluate information. Zak’s experiments with economic trust games showed oxytocin levels rise when people perceive trust signals, and those levels correlate directly with more cooperative behavior.

So you’re not just getting a recommendation from your friend. You’re receiving it while in a neurochemical state that makes you more open to it. That’s kind of unfair, honestly. No product page can compete with that.

Nielsen’s 2021 Trust in Advertising study (40,000 respondents, multiple regions) put a number on it: 89% of respondents said the recommendations they trust most come from people they know. More than review sites, ads, or any AI system.

The agentic commerce gap nobody talks about

Here’s where it gets relevant if you’re building things.

Google’s Universal Commerce Protocol shipped January 2026. Shopify, Walmart, Target, Etsy, Wayfair co-developed it. Mastercard and Visa endorsed it. Agents now have a standardized way to discover products, manage carts, and checkout across merchants.

McKinsey projects agentic commerce at $3-5 trillion in global retail by 2030. AI agents influenced around $70 billion in GMV during Black Friday 2025. 44% of American consumers say they’re comfortable with a bot shopping for them.

The infrastructure is real. Agents can buy things. And some of them are genuinely good at it — they’ll pull your purchase history, remember your size, know you prefer navy over black, stick to brands you’ve bought before. That’s not trivial. But even the best of them are still treating buying as a matching problem. Find the best match across more dimensions. Present it. Done.

That’s not how people actually buy things. People buy after they’ve been convinced, and conviction comes from trusted sources, not optimal ones. Trust isn’t a feature you ship. It’s a biological response that’s been running for hundreds of thousands of years.

Three gaps

Your friend paid for that product with their own money. The agent didn’t. It has zero stake in whether you’re happy with the recommendation. Until agents can signal some genuine cost (reputation loss, accountability, anything), they’ll feel like a search engine with opinions.

An agent can know your preference history. It can’t know what it feels like to wear those shoes on a rainy commute. Experiential knowledge lives in the body, not the training data. We evolved to weight embodied experience over abstract information, and I don’t see that changing.

When your friend recommends something bad, they hear about it next time you grab coffee. That feedback loop keeps recommendations honest. Agents face zero social cost for being wrong. No reputation on the line, no awkward conversation.

Where this might actually go

I don’t think the answer is “make agents more human.” That’s the uncanny valley of commerce. People can smell synthetic trust, and honestly they should.

The more interesting direction is agents that connect you to the right humans. Instead of making the recommendation, the agent finds the person in your network who already owns the product and surfaces their experience.

“Your colleague Priya bought these running shoes six months ago and has logged 50 miles in them. Want me to ask her what she thinks?”

That’s an agent working with the trust circuit instead of trying to replace it.
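Here is a rough sketch of what that lookup could look like. Everything in it is made up for illustration: the `Contact` shape, the `closeness` score, and the idea that your contacts have agreed to share what they own. It is not how any real agent or protocol does this. The point is only that the agent picks a person and asks you first, instead of picking a product.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Contact:
    name: str
    closeness: float  # hypothetical 0..1 tie strength, higher means closer
    # Hypothetical opt-in ownership data: product id -> months owned.
    owned: dict[str, int] = field(default_factory=dict)


def find_owners(contacts, product_id, min_months=1):
    """Return contacts who have owned the product long enough, closest first."""
    owners = [c for c in contacts if c.owned.get(product_id, 0) >= min_months]
    return sorted(owners, key=lambda c: c.closeness, reverse=True)


def draft_prompt(contact, product_id, product_name):
    """Build the question the agent shows the user before contacting anyone."""
    months = contact.owned[product_id]
    return (
        f"{contact.name} has owned {product_name} for {months} months. "
        "Want me to ask what they think?"
    )


contacts = [
    Contact("Priya", 0.8, {"shoe-123": 6}),
    Contact("Sam", 0.4, {"shoe-123": 2}),
    Contact("Lee", 0.9, {}),  # close friend, but doesn't own the product
]

owners = find_owners(contacts, "shoe-123")
if owners:
    print(draft_prompt(owners[0], "shoe-123", "these running shoes"))
```

Lee is the closest contact but never shows up, because closeness only breaks ties among people who actually own the thing. Ownership is the filter; the relationship is the ranking.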

Or agents that surface the right reviews, not the most helpful or most recent, but ones from people whose usage patterns match yours. Homophily as a retrieval strategy.
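A minimal sketch of that idea, under some big assumptions: each review comes with a set of reviewer traits (the trait names below are invented), and "like me" is approximated with plain Jaccard overlap between your traits and theirs. A real system would need a far better similarity measure, and getting those traits at all is its own problem.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Review:
    text: str
    rating: int
    reviewer_traits: frozenset[str]  # hypothetical usage/lifestyle tags


def jaccard(a, b):
    """Overlap between two trait sets: |a & b| / |a | b|, or 0.0 if both are empty."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def rank_by_homophily(user_traits, reviews, top_k=3):
    """Order reviews by reviewer similarity to the user, ignoring rating and recency."""
    scored = [(jaccard(user_traits, r.reviewer_traits), r) for r in reviews]
    # sorted() is stable, so equally similar reviews keep their original order.
    scored = sorted(scored, key=lambda pair: pair[0], reverse=True)
    return [review for score, review in scored[:top_k] if score > 0]


user = frozenset({"frequent_flyer", "commuter", "budget_mid"})

reviews = [
    Review("Great product, five stars.", 5, frozenset()),
    Review("Battery died halfway through a long-haul flight.", 1,
           frozenset({"frequent_flyer", "commuter"})),
    Review("Perfect at my desk all day.", 5,
           frozenset({"desk_worker", "budget_mid"})),
]

for review in rank_by_homophily(user, reviews):
    print(review.rating, review.text)
```

Notice the ranking never looks at the star rating. The one-star review from the frequent flyer comes first because that reviewer's life looks most like the user's, and the anonymous five-star review is dropped because there is nobody behind it to compare against.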

The agent doesn’t need to be trusted. It needs to be a conduit to trust.

So

AI can process more product data in a second than you’ll evaluate in a lifetime. It can match preferences with mathematical precision. It can predict what you want before you know you want it.

You’ll still ask your friend.

That’s not a bug in human cognition. That’s the feature that kept us alive long enough to build the AI.

If you’re building agents that transact, build them to broker trust, not bypass it. The biology isn’t going anywhere.

About Hemanth HM

Hemanth HM is a Sr. Machine Learning Manager at PayPal, Google Developer Expert, TC39 delegate, FOSS advocate, and community leader with a passion for programming, AI, and open-source contributions.